New rules for online services: what you need to know

The Online Safety Act makes businesses, and anyone else who operates any of a wide range of online services, legally responsible for keeping people (especially children) in the UK safe online.

This page explains:

- who the rules apply to;

- what they mean;

- if they apply to you, what you can do now; and

- other important things you should know about the rules.

You can also read our quick guides to:

- online safety risk assessments;

- our online safety codes of practice; and

- rules for services that provide online pornography.

Who the rules apply to

The rules apply to services that are made available over the internet (or ‘online services’). This might be a website, app or another type of platform. If you or your business provides an online service, then the rules might apply to you.

Specifically, the rules cover services where:

- people can create and share content, or interact with each other (the Act calls these ‘user-to-user services’);

- people can search other websites or databases (‘search services’); or

- you or your business publish or display pornographic content.

To give only a few examples, a 'user-to-user' service could be:

- a social media site or app;

- a photo- or video-sharing service;

- a chat or instant messaging service, like a dating app; or

- an online or mobile gaming service.

The rules apply to organisations big and small, from large and well-resourced companies to very small ‘micro-businesses’. They also apply to individuals who run an online service.

It doesn’t matter where you or your business is based. The new rules will apply to you (or your business) if the service you provide has a significant number of users in the UK, or if the UK is a target market.
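As a rough illustration only, the scope test described above can be sketched in code. This is not Ofcom's online tool and not legal advice: the class, field and function names are hypothetical, and whether a service has a "significant number" of UK users is treated as a yes/no input because this page does not define a threshold.

```python
from dataclasses import dataclass


@dataclass
class OnlineService:
    # All field names are hypothetical; each mirrors one criterion from the text above.
    user_to_user: bool = False           # people can create/share content or interact
    search: bool = False                 # people can search other websites or databases
    publishes_pornography: bool = False  # the provider publishes or displays pornographic content
    significant_uk_users: bool = False   # threshold not defined on this page (assumption: yes/no input)
    uk_target_market: bool = False       # the UK is a target market


def rules_might_apply(service: OnlineService) -> bool:
    """Return True if the service is of a covered type AND has a UK link."""
    covered_type = (
        service.user_to_user or service.search or service.publishes_pornography
    )
    uk_link = service.significant_uk_users or service.uk_target_market
    return covered_type and uk_link


# Example: a small overseas forum that targets UK users.
forum = OnlineService(user_to_user=True, uk_target_market=True)
print(rules_might_apply(forum))  # True
```

The size of the organisation and where it is based are deliberately not inputs, because neither affects whether the rules apply.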

Unsure if the rules apply to you? We can help you check

Our online tool will help you decide whether or not the new rules are likely to apply to you. Answer six short questions about your business and the service(s) you provide, then get a result.

We are still developing this tool and we welcome your feedback. We will not change the possible results, so feel free to try it now.

You need to assess and manage risks to people's online safety

If the rules apply to your service, then we'll expect you to make sure that the steps you take to keep people in the UK safe are good enough.

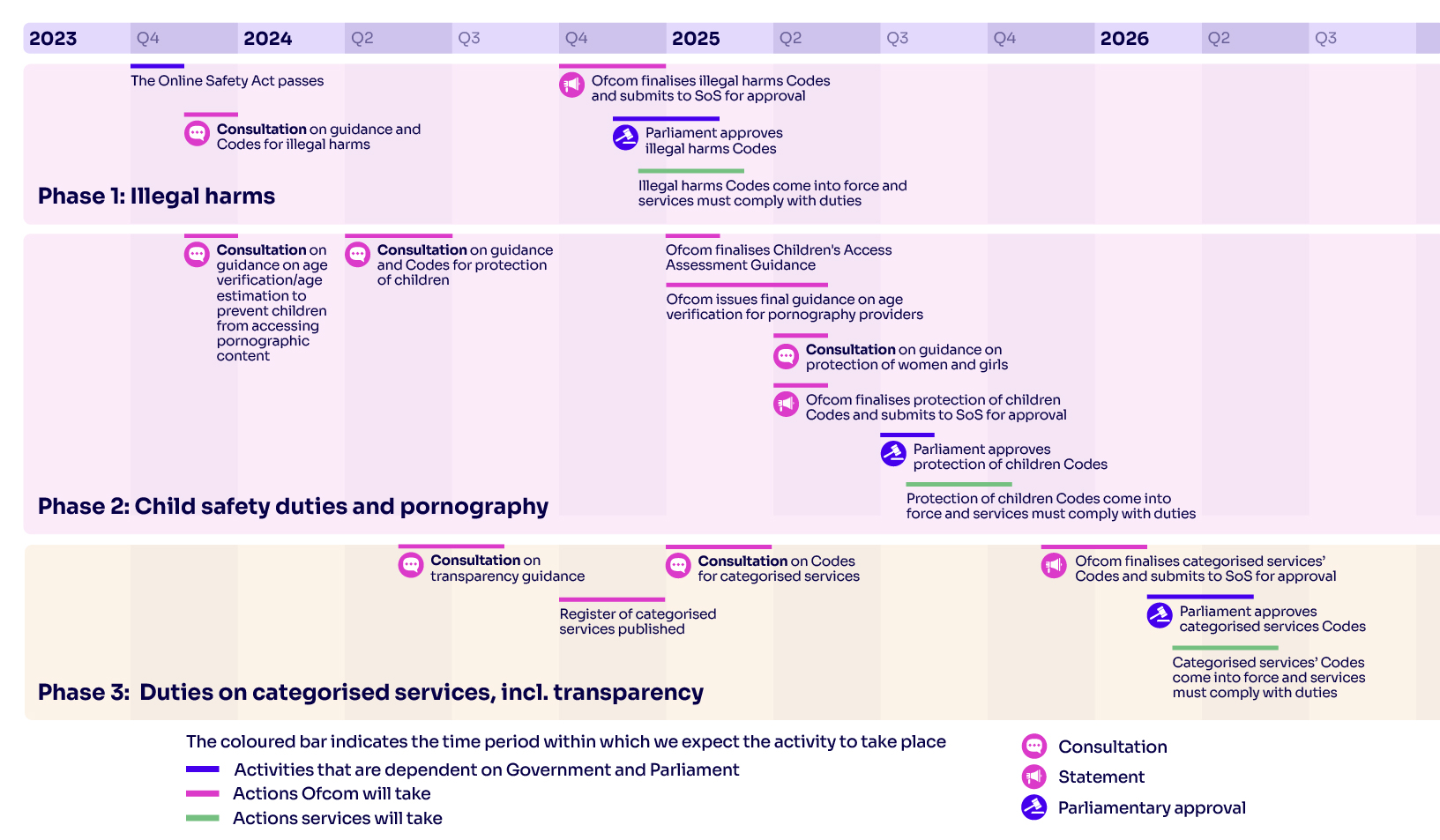

Most of the rules haven’t come into force yet – we’re taking a phased approach and we expect the first new duties to take effect at the end of 2024. But you can start to get ready now.

While the precise duties vary from service to service, most businesses will need to:

- assess the risk of harm from illegal content;

- assess the particular risk of harm to children from harmful content (if children are likely to use your service);

- take effective steps to manage and mitigate the risks you identify in these assessments – we will publish codes of practice you can follow to do this;

- in your terms of service, clearly explain how you will protect users;

- make it easy for your users to report illegal content, and content harmful to children;

- make it easy for your users to complain, including when they think their post has been unfairly removed or account blocked; and

- consider the importance of protecting freedom of expression and the right to privacy when implementing safety measures.

A few businesses will have other duties to meet, so that:

- people have more choice and control over what they see online; and

- companies are more transparent and can be held to account for their activities.

It's up to you to assess the risks on your service, then decide which safety measures you need to take.

To help you, we'll publish a range of resources – including information about risk and harm online, guidance and codes of practice.

Find out more about how to carry out an online safety risk assessment

Find out more about our codes of practice

If you think the rules apply to you, you can start getting ready now

Most of the new rules will only come into force in late 2024. But if you think they'll apply to you, here are some things you can start doing now:

Subscribe to updates from us

If you subscribe to email updates, we'll send you the latest information about how we regulate. This includes any important changes to what you need to do. You'll also be the first to know about our new publications and research.

Read more about how we propose to regulate illegal harms

We have consulted on how businesses need to protect their users from illegal harms online. We expect these requirements to come into force in late 2024.

Read a summary of our proposals (PDF, 463.4 KB) to find out how they affect you.

Read more about our approach to implementing the Act

Ofcom will implement the new rules in three phases (check the timings below). We explain each phase fully in our approach document.

Think about updating your terms of service

If you provide a user-to-user service and the rules apply to you, you should consider whether you need to make changes to your terms of service now.

In clear and accessible language, your terms of service must inform users of their right to bring a claim for breach of contract if:

- content that they generate, upload or share is taken down, or access to it is restricted, in breach of your terms of service; or

- they are suspended or banned from using the service in breach of the terms of service.

This is set out in section 72(1) of the Act. You might need to think about making further changes to your terms of service, when more of the new rules to protect users from illegal harms online come into force in late 2024.

If you receive an information request from us, respond to it

As the regulator, we may send you a request for information (an 'information notice') if we need information from you. If you get a request, you are legally required to respond to it – and we could take enforcement action against you if you don't. Find out more.

Other important things you should know

Ofcom is the regulator for online safety. We have a range of powers and duties to implement the new rules and ensure people are better protected online. We have published our overall approach and the outcomes we want to achieve.

The Act expects us to help services follow the rules – including by providing guidance and codes of practice. These will help you understand how harm can take place online, what factors increase the risks, how you should assess these risks, and what measures you should take in response. We will consult on everything we’re required to produce before we publish the final version.

We want to work with you to keep adults and children safe. We’ll provide guidance and resources to help you meet your new duties. These will include particular support for small to medium-sized enterprises (SMEs).

But if we need to, we will take enforcement action if we determine that a business is not meeting its duties – for example, if it isn’t doing enough to protect users from harm.

We have a range of enforcement powers to use in different situations: we will always use them in a proportionate, evidence-based and targeted way. We can direct businesses to take specific steps to come into compliance. We can also fine companies up to £18m, or 10% of their qualifying worldwide revenue (whichever is greater).
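To make the "whichever is greater" rule concrete, here is a minimal arithmetic sketch of the maximum fine. The function name and the use of whole pounds are assumptions for illustration. The result is only the upper limit ("up to"), not the fine that would be imposed in any given case.

```python
FIXED_CAP_GBP = 18_000_000  # the £18m figure stated above


def maximum_fine_cap_gbp(qualifying_worldwide_revenue_gbp: int) -> int:
    """Return the greater of £18m and 10% of qualifying worldwide revenue.

    Hypothetical helper: revenue is given in whole pounds, and the 10%
    figure is rounded down to a whole pound.
    """
    ten_percent = qualifying_worldwide_revenue_gbp // 10
    return max(FIXED_CAP_GBP, ten_percent)


# Revenue of £500m: 10% is £50m, which exceeds £18m.
print(maximum_fine_cap_gbp(500_000_000))  # 50000000

# Revenue of £100m: 10% is £10m, so the £18m figure is the greater one.
print(maximum_fine_cap_gbp(100_000_000))  # 18000000
```

The two figures are equal at a qualifying worldwide revenue of £180m. Above that point, the 10% figure sets the upper limit.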

In the most severe cases, we can seek a court order imposing “business disruption measures”. This could mean asking a payment or advertising provider to withdraw from the business' service, or asking an internet service provider to limit access.

We can also use our enforcement powers if you fail to respond to a request for information.

You can find more information about our enforcement powers, and how we plan to use them, in our draft enforcement guidance.

The new rules cover any kind of illegal content that can appear online, but the Act includes a list of specific offences that you should consider. These are:

- terrorism offences;

- child sexual exploitation and abuse (CSEA) offences, including grooming and child sexual abuse material (CSAM);

- encouraging or assisting suicide (or attempted suicide) or serious self-harm offences;

- harassment, stalking, threats and abuse offences;

- hate offences;

- controlling or coercive behaviour (CCB) offence;

- drugs and psychoactive substances offences;

- firearms and other weapons offences;

- unlawful immigration and human trafficking offences;

- sexual exploitation of adults offence;

- extreme pornography offence;

- intimate image abuse offences;

- proceeds of crime offences;

- fraud and financial services offences; and

- foreign interference offence (FIO).

Our consultation includes our proposed guidance for these offences, while our draft register of risks (PDF, 3.2 MB) (organised by each kind of offence) looks at the causes and impact of illegal harm online.

All user-to-user services and search services will need to:

- carry out an illegal content risk assessment – we will provide guidance to help you do this;

- meet your safety duties on illegal content – this includes removing illegal content, taking proportionate steps to prevent your users encountering it, and managing the risks identified in your risk assessment – our codes of practice will help you do this;

- record in writing how you are meeting these duties – we will provide guidance to help you do this;

- explain your approach in your terms of service (or publicly available statement); and

- allow your users to report illegal harm and submit complaints.

One way to protect the public and meet your safety duties is to adopt the safety measures we set out in our codes of practice. We’re currently consulting on our draft codes for illegal harms. The draft codes cover a range of measures in areas like content moderation, complaints, user access, design features to support users, and the governance and management of online safety risks.

In our draft codes, we have carefully considered which services each measure should apply to, with some measures only applying to large and/or risky services. The measures in our codes are only recommendations, so you can choose alternatives. But if you do adopt all the recommended measures that are relevant to you, then you will be meeting your safety duties.

If the rules apply to your service, then you will need to protect children from harm online. Some of these responsibilities are covered under the illegal content duties – such as tackling the risk of child sexual exploitation and abuse offences, including grooming and child sexual abuse material.

Some types of harmful content (which aren’t illegal) are covered by the children’s safety duties. These only apply if your online service can be accessed by children.

The Act specifies many types of content that are harmful to children, including:

- pornographic content;

- content which encourages, promotes or provides instruction for:

  - suicide;

  - deliberate self-injury;

  - eating disorders;

  - an act of serious violence against a person;

  - a challenge or stunt highly likely to result in serious injury to the person who does it, or to someone else;

- content that is abusive or incites hatred towards people based on characteristics of race, religion, sex, sexual orientation, disability or gender reassignment;

- bullying content;

- content that depicts real or realistic serious violence against a person, or serious injury of a person;

- content that depicts real or realistic serious violence against an animal, or serious injury of an animal; and

- content that encourages a person to ingest, inject, inhale or in any other way self-administer a physically harmful substance, or a substance in such a quantity as to be physically harmful.

The rules require you to treat different kinds of content in different ways, and we will explain this in our future guidance.

Carry out a children’s access assessment

If you provide a user-to-user service or search service, you’ll need to assess whether or not children can access it. If it’s likely they can access your service, then the children’s safety duties will apply.

This is a formal assessment, and Ofcom will provide guidance on how to carry it out. We expect to consult on our proposed approach in Spring 2024. You will need to carry out your first assessment once we have published our final guidance.
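The relationship between the illegal content duties and the children's safety duties can be sketched as a simple decision. This is an informal illustration with hypothetical names, not a substitute for the formal children's access assessment or for Ofcom's guidance. It assumes the outcome of that assessment is already known and passed in as a yes/no value.

```python
def applicable_duty_sets(
    user_to_user_or_search: bool,
    children_likely_to_access: bool,
) -> list[str]:
    """List which sets of duties described on this page would apply.

    Hypothetical helper: both inputs are conclusions the provider has
    already reached, not something this function works out.
    """
    duties: list[str] = []
    if user_to_user_or_search:
        # All user-to-user and search services have illegal content duties.
        duties.append("illegal content duties")
        if children_likely_to_access:
            # Added only when children are likely to be able to access the service.
            duties.append("children's safety duties")
    return duties


print(applicable_duty_sets(True, False))  # ['illegal content duties']
print(applicable_duty_sets(True, True))   # ['illegal content duties', "children's safety duties"]
```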

Assess the risks and meet your safety duties

If you have assessed and found that the children’s safety duties apply, then you’ll need to:

- carry out a children’s risk assessment which addresses how content that’s harmful to children could be encountered on your service – we will provide guidance to help you do this;

- fulfil your children’s safety duties – these include preventing children from encountering certain kinds of harmful content, other measures to protect them from harm, and managing the risks identified in your risk assessment – our children's codes of practice will help you do this;

- record in writing how you are meeting these responsibilities – we will provide guidance to help you do this;

- explain your approach in your terms of service (or publicly available statement); and

- allow your users to report harmful content and submit complaints.

One way to protect the public and meet your safety duties is to adopt the measures we set out in our children’s codes of practice. These will be specific measures for protecting children.

We expect to consult on our draft children’s codes of practice in Spring 2024, with the legal duties coming into force once we have finalised our approach.

Under the rules, a very small number of online services will be designated as 'categorised services'. These services will have additional duties to meet.

A service will be categorised according to the number of people who use it, and the features it has. Depending on whether your service is designated as Category 1, 2A or 2B, you might be expected to:

- produce transparency reports about your online safety measures;

- provide user empowerment tools – including giving adult users more control over certain types of content, and offering adult users the option to verify their identity;

- operate in line with your terms of service;

- protect certain types of journalistic content; and/or

- prevent fraudulent advertising.

In July 2023, we invited evidence to inform our approach to categorisation. We will advise the Government on the thresholds for these categories, so it can then make laws on categorisation. We expect to publish a register of these categorised services in late 2024. For more information, see our roadmap.

When implementing safety measures and policies – including on illegal harm and the protection of children – you will need to consider the importance of protecting users’ privacy and freedom of expression.

Ofcom will consider any risks to these rights when preparing our codes of practice and other guidance, and include appropriate safeguards.